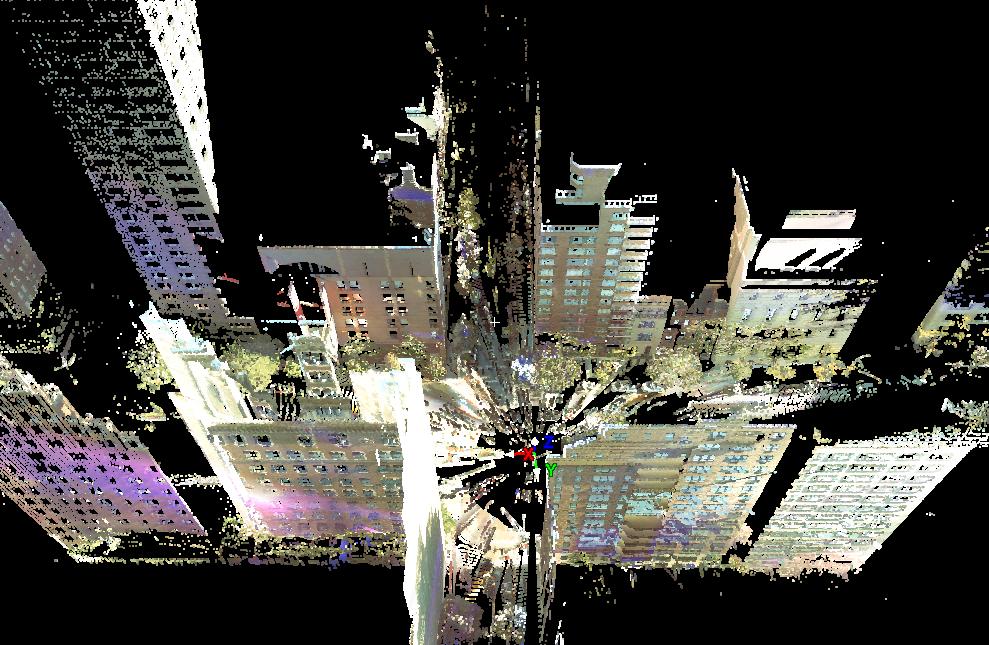

Figure: (a) 3D range scan of

Park Avenue and 70th street (scanner is a the center of the

intersection). Bird's-eye view. Data gathered by the Leica

ScanStation2 of our laboratory. (b) 3D texture-mapped model of

building at CCNY. Camera positions are shown (see IJCV 2008

paper in

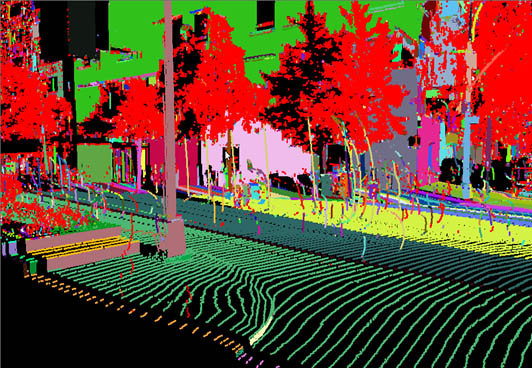

publications). (c)

Online classification of objects in urban scene (see 3DPVT

2010 paper).

Course Overview

Recent advances in computer hardware have made possible the efficient rendering of realistic 3D models on inexpensive PCs, something that a few years ago was possible only on high-end visualization workstations. This class will cover in detail the field of 3D Photography, the process of automatically creating 3D texture-mapped models of objects. We will concentrate on the topics at the intersection of Computer Vision and Computer Graphics that are relevant to acquiring, creating, and representing 3D models of small objects or large urban areas. Many interesting research questions remain to be answered. For example: How do we acquire real shapes? How do we represent geometry? Can we detect similarities between shapes? Can we detect symmetries within shapes? How do we register 3D geometry with color images? Applications that benefit from this technology include image retrieval in digital libraries, image search, face recognition, historical preservation, urban planning, Google-type maps, architecture, navigation, virtual reality, e-commerce, digital cinematography, and computer games, to name just a few.

The core of the class will be a set of presentations of recent papers, along with an introduction to fundamental topics. The research facilities of the Vision and Graphics Laboratory will be available to registered class participants. The research of our laboratory is supported by the National Science Foundation through three active NSF awards. So, if you are interested in a research topic, please join the class!

Course Format

There will be a weekly class, with presentations by the instructor. The presentations will introduce the basic concepts and techniques of the field.

The grade will be based on the following: 60% for group/individual projects, 30% for the final project, and 10% for class participation.

Prerequisites

Linear algebra, data structures and algorithms, and C/C++ or Java programming. No prior knowledge of vision is assumed. Courses such as image processing, computer graphics, and digital tomography are helpful but are not required to understand the material.

Topics

- Acquiring images: 2D and 3D sensors (digital cameras and laser range scanners).
- Camera calibration.
- 3D and 2D image registration.
- Stereopsis.
- Optical flow.
- Segmentation.
- Geometry: representation of 3D models, simplification of 3D models, detection of symmetry.
- Photometric stereo.
- Image-based rendering.
- Texture mapping.

Course Material

This class will be based on recent publications and recent workshops. A set of seminars, books, and journals is provided for your reference.

Computer Vision Books:

Computer Vision: Algorithms and Applications. Richard Szeliski, 2010. Online version.

Introductory Techniques for 3-D Computer Vision. Emanuele Trucco and Alessandro Verri. Prentice Hall, 1998.

Robot Vision. B. K. P. Horn. The MIT Press, 1998 (12th printing).

Computer Vision: A Modern Approach. David S. Forsyth and Jean Ponce. Prentice Hall, 2003. Some online content.

Three-Dimensional Computer Vision: A Geometric Viewpoint. Olivier Faugeras. The MIT Press, 1996.

An Invitation to 3-D Vision. Yi Ma, Stefano Soatto, Jana Kosecka, and S. Shankar Sastry. Springer-Verlag, 2004.

Computer Vision. Linda Shapiro and George Stockman. Prentice Hall, 2001.

Computer Graphics Books:

Computer Graphics: Principles and Practice. Foley, van Dam, Feiner, and Hughes. Addison-Wesley, 1997.

3D Computer Graphics. Alan Watt. Addison-Wesley, 2000.

OpenGL Programming Guide. Mason Woo, Jackie Neider, and Tom Davis. Addison-Wesley, 1998.

Computer Vision and Graphics Journals:

International Journal of Computer Vision.

Computer Vision and Image Understanding.

IEEE Trans. on Pattern Analysis and Machine Intelligence.

SIGGRAPH (http://www.siggraph.org).